How to Validate an AI Product Idea in 30 Days

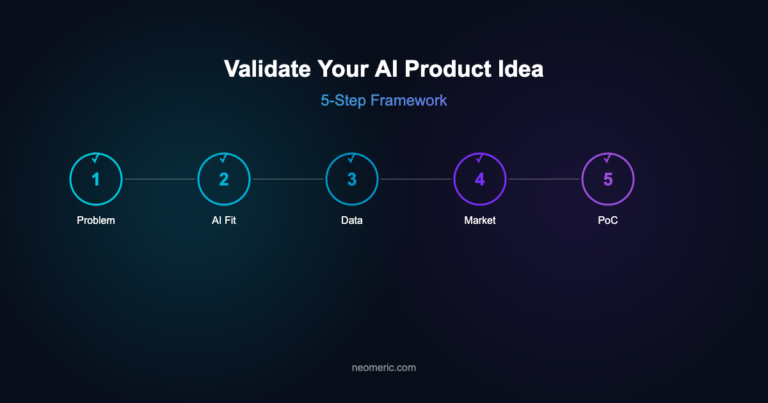

Most failed AI products don’t fail because the model was bad. They fail because nobody stopped to check whether the problem was worth solving, the data was accessible, or the unit economics survived contact with GPU bills. According to Pertama Partners’ 2026 AI Project Failure Statistics, over 80% of AI projects never reach production. Validating an AI product idea is different from validating a SaaS idea — you’re validating a problem, a user, a dataset, a workflow, and the economics of inference. This 30-day checklist walks through the exact steps we run with founders inside our AI Product Incubation engagements.

If you do nothing else, do these four things before you write a line of production code: confirm the problem with 10+ user conversations, prove the data exists and is legally usable, run a smoke test for willingness to pay, and model your cost-per-inference against your price point. Everything below expands on that core.

Week 1: Problem and customer (Days 1–7)

Your goal this week is to stop believing your own pitch and start hearing the problem in your customers’ own words.

- Write the problem in one sentence. Not the solution. The problem. “Mid-market ops teams spend 6 hours a week reconciling invoices across three systems” is a problem. “An AI agent that reconciles invoices” is a solution dressed up as one.

- Define a narrow ICP. Role, company size, industry, and the specific workflow they own. If your answer is “product managers” or “small businesses,” it’s too broad. “Series B SaaS product managers who own activation metrics” is workable.

- Book 10 discovery calls. 20–30 minutes each. Ask about the last time they hit the problem, what they did, and what it cost them in time or money. Do not demo. Do not pitch.

- Score the pain. After each call, rate frequency (daily/weekly/monthly), severity (annoying/expensive/blocking), and whether they’re already paying to solve it — hacks, spreadsheets, a junior employee, a competitor’s product. If fewer than 6 of 10 rate it severe and recurring, pick a new problem.
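One way to keep the scoring honest is to tally it in a few lines of code instead of from memory. The sketch below is illustrative only: the `CallScore` class and its field names are hypothetical, and it assumes "severe" means expensive or blocking and "recurring" means daily or weekly, which the rubric above leaves to your judgment.

```python
from dataclasses import dataclass


@dataclass
class CallScore:
    """One discovery call, scored with the rubric above (hypothetical structure)."""
    frequency: str        # "daily", "weekly", or "monthly"
    severity: str         # "annoying", "expensive", or "blocking"
    already_paying: bool  # hacks, spreadsheets, a junior employee, a competitor

    def is_severe_and_recurring(self) -> bool:
        # Assumption: severe = expensive or blocking; recurring = daily or weekly.
        severe = self.severity in ("expensive", "blocking")
        recurring = self.frequency in ("daily", "weekly")
        return severe and recurring


def problem_passes(calls: list[CallScore], min_hits: int = 6) -> bool:
    """True if at least `min_hits` calls rate the pain severe and recurring."""
    hits = sum(call.is_severe_and_recurring() for call in calls)
    return hits >= min_hits


# Made-up scores for ten calls, for illustration only.
calls = (
    [CallScore("weekly", "expensive", True)] * 5
    + [CallScore("daily", "blocking", False)] * 2
    + [CallScore("monthly", "annoying", False)] * 3
)
print(problem_passes(calls))
```

Recording `already_paying` separately keeps the strongest signal visible even though it is not part of the pass/fail threshold here.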

Week 2: Data and technical feasibility (Days 8–14)

This is where most non-AI validation frameworks fall short. An AI product lives or dies on data.

- Map the data you need. List every input your model requires: training data, fine-tuning data, retrieval data, and runtime context. For each, write down where it lives today, who owns it, and how you’d get to it.

- Check legal and consent. Is the data covered by existing terms? Does GDPR, HIPAA, or the EU AI Act change what you can do with it? If you’re training on customer data, you need contractual permission — assume you don’t have it until a lawyer says otherwise.

- Prototype the worst-case prompt. Before you build anything, take 20 real examples of the task and run them through a frontier model with a naive prompt. If GPT-class output is already 80% of the way there, that’s your floor. If it’s 20%, you have a genuine moat opportunity — or an impossible problem. Know which one.

- Estimate cost-per-task. Token counts times model price, plus any retrieval and evaluation overhead. Multiply by expected usage. If your cost-per-task exceeds 10% of what a customer would pay per task, your margins are already in trouble.
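The cost-per-task estimate is simple arithmetic, so it is worth writing down explicitly. The following is a minimal sketch, not a pricing tool: the function names are hypothetical, the token counts and per-million-token prices are placeholder numbers rather than any vendor's real rates, and retrieval and evaluation overhead is lumped into a single per-task figure.

```python
def cost_per_task(
    input_tokens: int,
    output_tokens: int,
    input_price_per_1m: float,
    output_price_per_1m: float,
    overhead_per_task: float = 0.0,
) -> float:
    """Token counts times model price, plus retrieval/eval overhead, in dollars."""
    model_cost = (
        input_tokens * input_price_per_1m + output_tokens * output_price_per_1m
    ) / 1_000_000
    return model_cost + overhead_per_task


def margin_in_trouble(cost: float, price_per_task: float, max_ratio: float = 0.10) -> bool:
    """True if cost-per-task exceeds 10% (by default) of the price per task."""
    return cost > max_ratio * price_per_task


# Placeholder inputs: replace with your own measured token counts and prices.
cost = cost_per_task(
    input_tokens=4_000,
    output_tokens=1_000,
    input_price_per_1m=3.0,
    output_price_per_1m=15.0,
    overhead_per_task=0.005,
)
monthly_cost = cost * 20_000  # multiply by expected usage (tasks per month)

print(f"cost per task: ${cost:.4f}")
print(f"monthly inference cost: ${monthly_cost:,.2f}")
print("margins in trouble:", margin_in_trouble(cost, price_per_task=0.25))
```

Measure token counts from the 20 real examples you ran in the previous step; guessed averages tend to understate long inputs.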

Cost-per-task depends heavily on whether you build your own model or buy a foundation model API. For a deeper dive on that trade-off, see our earlier post on when to build your own model versus buy a foundation model API.

Week 3: Willingness to pay (Days 15–21)

Interest is cheap. Money and calendar time are the only real signals.

- Ship a landing page. One page, one promise, one CTA. Collect email and a qualifying question. Drive 500–1,000 visitors through paid or community channels. A conversion rate below 3–5% on a well-targeted audience is a yellow flag.

- Run a pre-sell. Offer a paid pilot, a design-partner slot, or a refundable deposit. A letter of intent is fine; a signed PO is better; a wire is best. Five founders saying “this is awesome” is worth less than one saying “here’s my credit card.”

- Run a Wizard of Oz test. Let five users complete the workflow end to end, with humans doing the AI work behind the curtain. Measure whether they come back. Retention at this stage predicts retention at scale better than any survey.
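When you read the landing page number, remember that a conversion rate measured on a few hundred visitors is a noisy estimate. The sketch below computes the observed rate together with a Wilson score interval, a standard way to put error bars on a proportion. Using an interval here is our suggestion rather than part of the checklist, and the visitor and signup counts are made up.

```python
import math


def wilson_interval(conversions: int, visitors: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a conversion rate (z=1.96 is roughly 95% confidence)."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    if not 0 <= conversions <= visitors:
        raise ValueError("conversions must be between 0 and visitors")
    p = conversions / visitors
    denom = 1 + z**2 / visitors
    center = (p + z**2 / (2 * visitors)) / denom
    half_width = (
        z * math.sqrt(p * (1 - p) / visitors + z**2 / (4 * visitors**2)) / denom
    )
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Made-up example: 20 signups from 500 visitors.
low, high = wilson_interval(conversions=20, visitors=500)
print(f"observed: {20 / 500:.1%}, plausible range: {low:.1%} to {high:.1%}")
```

If the range straddles the 3–5% line, treat the result as a reason to send more traffic before drawing a conclusion.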

Week 4: Go-to-market and decision (Days 22–30)

- Model the unit economics. Combine CAC from your landing page test, gross margin after inference and eval costs, and expected retention from the Wizard of Oz test. If LTV:CAC looks worse than 3:1 with conservative assumptions, revisit pricing or ICP before building.

- Identify your distribution edge. Do you have a warm channel — an existing audience, an integration partner, a community? AI products with no distribution advantage get crushed by the incumbent that bolts AI onto an existing workflow. If you can’t name a channel, that’s a problem to solve before a product to build.

- Write the kill-or-continue memo. One page. Problem score, data verdict, economics verdict, pre-sell results, and the single biggest risk. Share it with two people you trust who will actually push back.
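The unit economics check in this week can be roughed out in code before it goes into the memo. This sketch assumes a common simplified formula, LTV = monthly price × gross margin ÷ monthly churn, and treats CAC as acquisition spend divided by paying customers won. Both are simplifying assumptions, the function names are hypothetical, and every number is a placeholder.

```python
def ltv(monthly_price: float, gross_margin: float, monthly_churn: float) -> float:
    """Simplified lifetime value: margin-adjusted monthly revenue over monthly churn."""
    if not 0 < monthly_churn <= 1:
        raise ValueError("monthly_churn must be in (0, 1]")
    return monthly_price * gross_margin / monthly_churn


def cac(acquisition_spend: float, paying_customers: int) -> float:
    """Customer acquisition cost: spend divided by paying customers won."""
    if paying_customers <= 0:
        raise ValueError("need at least one paying customer to compute CAC")
    return acquisition_spend / paying_customers


# Placeholder inputs: use conservative values from your own tests.
lifetime_value = ltv(monthly_price=200.0, gross_margin=0.70, monthly_churn=0.05)
acquisition_cost = cac(acquisition_spend=1_500.0, paying_customers=2)
ratio = lifetime_value / acquisition_cost

print(f"LTV ${lifetime_value:,.0f}, CAC ${acquisition_cost:,.0f}, LTV:CAC {ratio:.1f}:1")
print("clears 3:1:", ratio >= 3.0)
```

Rerun it with worse churn and a lower margin than you expect; if the ratio only clears 3:1 under optimistic inputs, that belongs in the memo as a risk.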

The 30-day decision criteria

Kill it if any of these are true: fewer than 6 of 10 users describe the problem as severe and recurring; you cannot legally access the data you need; cost-per-task exceeds 10% of price; or your pre-sell generated zero paid commitments. Otherwise, you have earned the right to start building — and a much clearer brief for what to build first.
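The four kill criteria are mechanical enough to encode, which makes it harder to talk yourself past them. The sketch below is one possible encoding: the `ValidationResult` structure and its field names are hypothetical, and the thresholds are the ones stated above.

```python
from dataclasses import dataclass


@dataclass
class ValidationResult:
    """Inputs to the 30-day decision (hypothetical structure)."""
    severe_recurring_calls: int    # out of 10 discovery calls
    data_legally_accessible: bool
    cost_per_task: float
    price_per_task: float
    paid_commitments: int          # from the pre-sell


def kill_reasons(result: ValidationResult) -> list[str]:
    """Return every kill criterion that is triggered; an empty list means continue."""
    reasons = []
    if result.severe_recurring_calls < 6:
        reasons.append("fewer than 6 of 10 users rate the problem severe and recurring")
    if not result.data_legally_accessible:
        reasons.append("cannot legally access the required data")
    if result.cost_per_task > 0.10 * result.price_per_task:
        reasons.append("cost-per-task exceeds 10% of price")
    if result.paid_commitments == 0:
        reasons.append("pre-sell generated zero paid commitments")
    return reasons


# Made-up example values.
result = ValidationResult(
    severe_recurring_calls=7,
    data_legally_accessible=True,
    cost_per_task=0.032,
    price_per_task=0.25,
    paid_commitments=1,
)
reasons = kill_reasons(result)
print("KILL: " + "; ".join(reasons) if reasons else "CONTINUE")
```

Returning the list of reasons rather than a single boolean gives you the "single biggest risk" line for the memo when more than one criterion fails.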

We run a version of this exact checklist inside our AI Product Incubation service, and we’ve seen it save founders six figures in wasted build costs. For a longer read on what happens after validation, see our post on the AI product scaling checklist.

Ready to stress-test your AI product idea? Book a 30-minute incubation call — bring your problem statement and we’ll pressure-test it against this framework, live.